Data integration is key to turning an organization's data into insights and innovation. However, large data volumes, diverse sources, and complex transformations make it challenging. Dataflow Gen2, part of Microsoft Fabric, simplifies the data integration process so teams can get to those insights faster.

Dataflow Gen2

Dataflow Gen2 is the latest version of dataflows in Microsoft Fabric. It lets you transform and move data between different sources and destinations through a user-friendly interface, and it offers over 300 data and AI-based transformations. Because it is built on the familiar Power Query experience found in products like Excel and Power BI, it provides a low-code approach to data handling.
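Power Query records each transformation as a named step and evaluates the steps in order, with each step consuming the previous step's output. The Python sketch below mimics that idea with plain functions. It is only an analogy (Dataflow Gen2 itself expresses queries in the Power Query M language), and the table, columns, and step names are hypothetical.

```python
from typing import Any, Callable

Row = dict[str, Any]
Step = tuple[str, Callable[[list[Row]], list[Row]]]

def apply_steps(rows: list[Row], steps: list[Step]) -> list[Row]:
    # Each step receives the previous step's output, similar to Applied steps in Power Query.
    for _name, step in steps:
        rows = step(rows)
    return rows

# Hypothetical steps on a hypothetical sales table.
steps: list[Step] = [
    ("Removed blank rows", lambda rs: [r for r in rs if r["Product"]]),
    ("Changed type", lambda rs: [{**r, "Units": int(r["Units"])} for r in rs]),
    ("Filtered rows", lambda rs: [r for r in rs if r["Units"] > 10]),
]

raw = [
    {"Product": "Pen", "Units": "25"},
    {"Product": "", "Units": "3"},
    {"Product": "Ink", "Units": "7"},
]

result = apply_steps(raw, steps)
print(result)  # [{'Product': 'Pen', 'Units': 25}]
```

Keeping each step small and named makes it easy to see, and undo, exactly where the data changed.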

Dataflow Gen 2 is capable of managing diverse data types, including:

- Structured data – CSV files, Excel files, etc.

- Semi-structured data – HTML, XML, JSON, etc.

- Unstructured data – audio, video, images, etc.
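To make the first two categories concrete, here is a small Python sketch (not Dataflow Gen2 code) with made-up sample data. Structured CSV rows already share one set of columns, while semi-structured JSON can nest objects and vary in shape, so it is usually flattened before it fits a table. This is similar in spirit to expanding record columns in Power Query.

```python
import csv
import io
import json

# Structured: every CSV row has the same columns, so it maps straight onto a table.
csv_text = "id,name\n1,Asha\n2,Ben\n"
structured_rows = list(csv.DictReader(io.StringIO(csv_text)))

# Semi-structured: JSON records can nest objects and carry optional fields.
json_text = '[{"id": 1, "name": {"first": "Asha"}}, {"id": 2, "name": {"first": "Ben"}, "tags": ["vip"]}]'

def flatten(record: dict, prefix: str = "") -> dict:
    """Turn nested objects into dotted column names (e.g. name.first); lists are kept as-is."""
    flat = {}
    for key, value in record.items():
        column = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=f"{column}."))
        else:
            flat[column] = value
    return flat

semi_rows = [flatten(r) for r in json.loads(json_text)]
print(structured_rows)  # [{'id': '1', 'name': 'Asha'}, {'id': '2', 'name': 'Ben'}]
print(semi_rows)        # [{'id': 1, 'name.first': 'Asha'}, {'id': 2, 'name.first': 'Ben', 'tags': ['vip']}]
```

Note that the flattened JSON rows still differ in shape (only the second has `tags`), which is why semi-structured sources typically need extra shaping steps.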

Key features of Dataflow Gen2

- Streamlined Authoring – A shorter, simpler Power Query authoring experience with auto-save and background publishing.

- Flexible Data Destinations – Specify destinations like Fabric Lakehouse, Azure Data Explorer, Synapse Analytics, or Azure SQL Database.

- Enhanced Monitoring – Improved monitoring and refresh history for better dataflow management.

- Pipeline Integration – Seamless integration with data pipelines for orchestration and scheduling.

- High-Scale Compute – Uses staging Lakehouse and Warehouse items in the workspace as an enhanced compute engine for faster, more reliable processing of large data volumes.

- Exactly-Once Processing (E1P) – Ensures data integrity by preventing duplicates and data loss.

- Self-Service Management – Empowers users with self-service dataflow management without extensive coding.

- Automation and Streamlining – Designed to automate and streamline data integration processes for improved reliability.
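The no-duplicates half of exactly-once processing can be illustrated with an idempotent, keyed load: if the same batch is delivered twice (for example after a retry), writing by key overwrites instead of duplicating. This is a conceptual Python sketch only; it does not describe how Dataflow Gen2 implements E1P, and the `order_id` key is hypothetical.

```python
def idempotent_load(target: dict, batch: list[dict], key: str = "order_id") -> dict:
    # Keyed writes: re-applying the same record overwrites it instead of adding a copy.
    for record in batch:
        target[record[key]] = record
    return target

batch = [{"order_id": 1, "qty": 2}, {"order_id": 2, "qty": 5}]

# Naive append: a retried batch produces duplicates.
naive: list[dict] = []
naive.extend(batch)
naive.extend(batch)  # simulated retry

# Keyed load: the same retry leaves the table unchanged.
table: dict = {}
idempotent_load(table, batch)
idempotent_load(table, batch)  # simulated retry

print(len(naive), len(table))  # 4 2
```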

How to create a dataflow?

Follow these steps to create a dataflow.

Prerequisite

- Obtain a Microsoft Fabric tenant account with an active subscription. Refer to Lesson 3, “Getting started with Microsoft Fabric.”

- Verify that you have a Microsoft Fabric-enabled workspace set up and ready for use. Refer to Lesson 4, “Fabric Workspaces and how to create one?”

Create a dataflow

- Open https://app.fabric.microsoft.com in your browser.

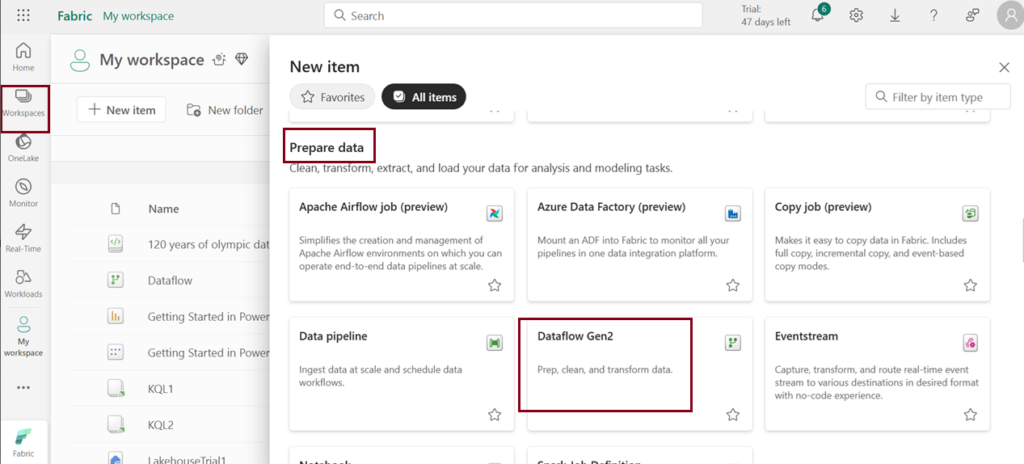

- Navigate to My workspace and click on Dataflow Gen2 under “New item”.

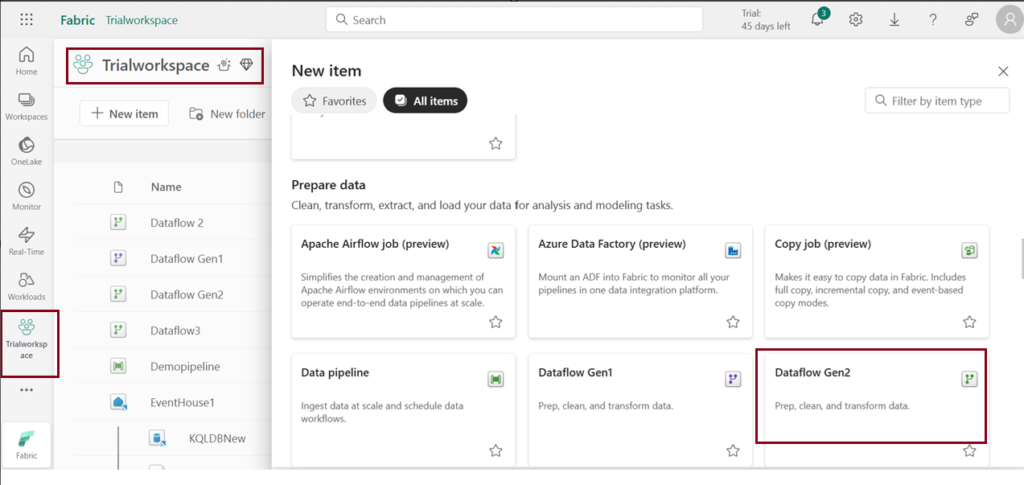

- Alternatively, open a workspace you created, click “New item”, and choose “Dataflow Gen2”.

- Download the dataset given below (sourced from Kaggle) and follow the remaining steps.

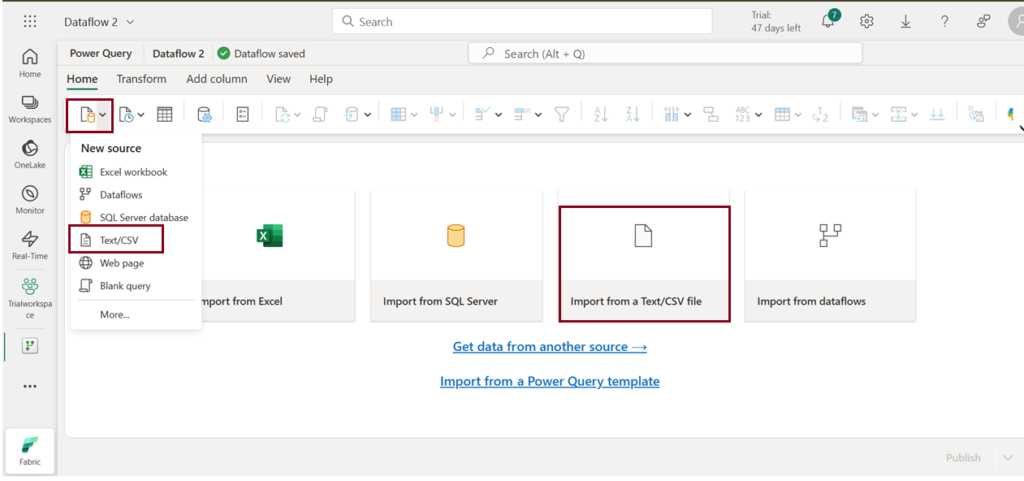

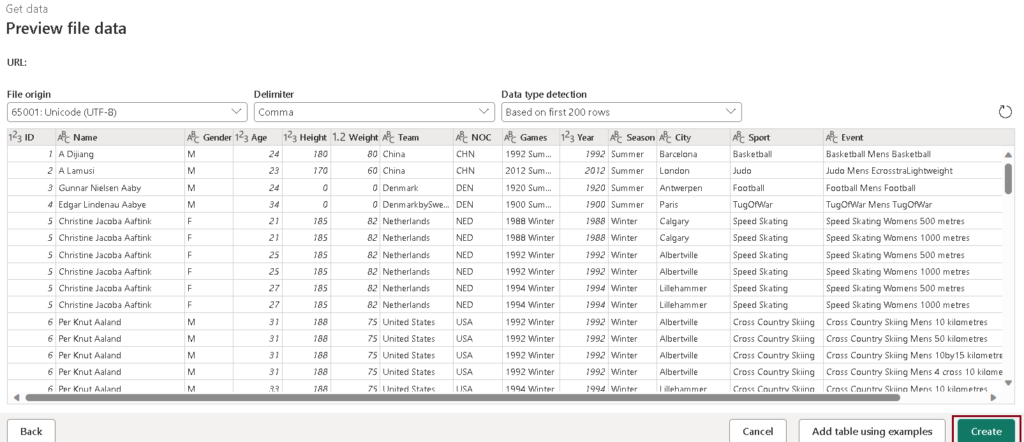

- Select Get data > Text/CSV, or select Import from a Text/CSV file on the editor's home screen, to import the CSV file.

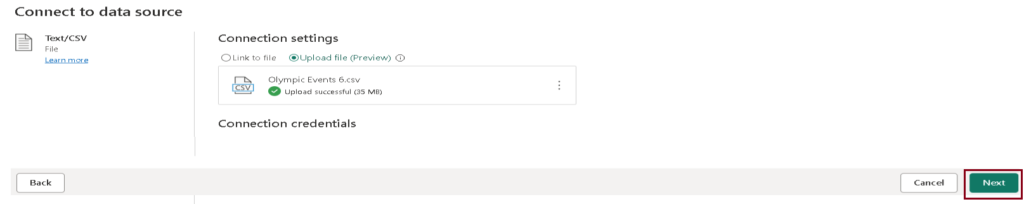

- Upload the dataset and click Next.

- View the table preview and click on “Create.”

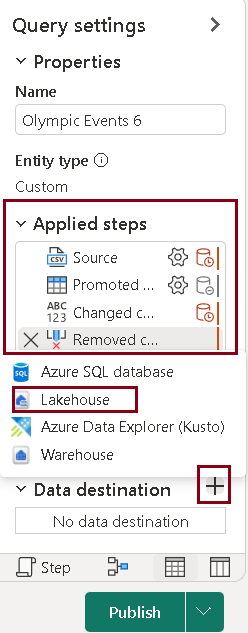

- In the query pane, Applied steps lists the transformations applied to the dataset. To add a destination, click the “+” symbol next to “Add destination” and select “Lakehouse.”

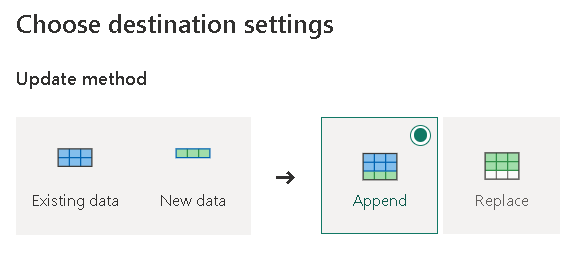

- Select the Lakehouse where you want to store the transformed table, choose an update method (Append or Replace), and save your settings.

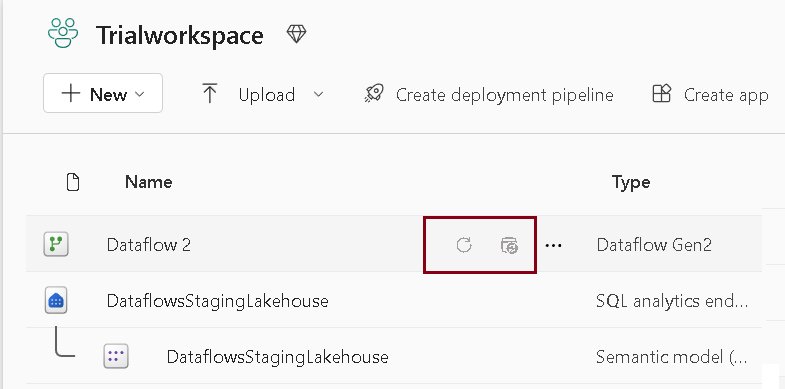

- Click Publish to publish your dataflow.
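The update method chosen for the Lakehouse destination decides what happens to rows already in the table when the dataflow refreshes. The Python sketch below models the two methods, Append and Replace, on in-memory lists. It illustrates the semantics only and is not the Lakehouse or Fabric API; `write_to_destination` and the sample rows are hypothetical.

```python
def write_to_destination(existing: list[dict], new_rows: list[dict], method: str) -> list[dict]:
    """Return the destination table after one refresh writes new_rows."""
    if method == "Replace":
        # Replace: existing rows are discarded; the table holds only the latest output.
        return list(new_rows)
    if method == "Append":
        # Append: the latest output is added after the rows already in the table.
        return existing + new_rows
    raise ValueError(f"unknown update method: {method!r}")

existing = [{"id": 1}, {"id": 2}]
refresh_output = [{"id": 1}, {"id": 2}]  # source unchanged since last refresh

replaced = write_to_destination(existing, refresh_output, "Replace")
appended = write_to_destination(existing, refresh_output, "Append")
print(len(replaced), len(appended))  # 2 4
```

As the output suggests, Append on an unchanged source grows the table with every refresh, so it suits sources that emit only new rows, while Replace suits full snapshots.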

A refresh feature allows you to update your dataflow with the latest data from your sources. The schedule refresh feature automates this process at a specified frequency and time.
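A daily refresh schedule with one or more configured times can be reasoned about as "the next configured time after now." The Python sketch below computes that, assuming a daily frequency and naive datetimes in a single time zone. The real Fabric scheduler (time zones, other frequencies, queuing) is not modeled, and `next_refresh` is a hypothetical helper.

```python
from datetime import datetime, time, timedelta

def next_refresh(now: datetime, daily_times: list[time]) -> datetime:
    """Return the first scheduled run strictly after `now` for a daily schedule.

    Raises ValueError if no refresh times are configured.
    """
    candidates = []
    # Today's remaining slots plus tomorrow's slots always contain the next run.
    for day_offset in (0, 1):
        day = (now + timedelta(days=day_offset)).date()
        for t in daily_times:
            run = datetime.combine(day, t)
            if run > now:
                candidates.append(run)
    return min(candidates)

schedule = [time(6, 0), time(18, 0)]
print(next_refresh(datetime(2024, 5, 1, 10, 30), schedule))  # 2024-05-01 18:00:00
print(next_refresh(datetime(2024, 5, 1, 19, 0), schedule))   # 2024-05-02 06:00:00
```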

Note: When you create the first Dataflow Gen2 in a workspace, Fabric sets up essential staging components such as Lakehouse and Warehouse items. These items, along with their related artifacts, are shared by all dataflows in the workspace. Do not delete them, because Dataflow Gen2 depends on them to work correctly. Although you won't see them directly in the workspace, they may be accessible through other tools such as Notebooks or the SQL analytics endpoint. You can identify them by their name prefix, ‘DataflowsStaging’.
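If you maintain a cleanup script for workspace items, a simple guard is to set aside anything whose name starts with the staging prefix. The Python sketch below assumes you already have a list of item records with a `displayName` field (modeled loosely on Fabric REST API item listings; treat the field name as an assumption), and the item names are hypothetical.

```python
STAGING_PREFIX = "DataflowsStaging"

def split_staging_items(items: list[dict], prefix: str = STAGING_PREFIX) -> tuple[list[str], list[str]]:
    """Split item names into (others, protected staging items) by name prefix."""
    protected = [i["displayName"] for i in items if i["displayName"].startswith(prefix)]
    others = [i["displayName"] for i in items if not i["displayName"].startswith(prefix)]
    return others, protected

# Hypothetical workspace contents.
items = [
    {"displayName": "DataflowsStagingLakehouse", "type": "Lakehouse"},
    {"displayName": "SalesLakehouse", "type": "Lakehouse"},
    {"displayName": "DataflowsStagingWarehouse", "type": "Warehouse"},
]

others, protected = split_staging_items(items)
print(protected)  # ['DataflowsStagingLakehouse', 'DataflowsStagingWarehouse']
```

A cleanup script would then act only on `others` and never touch `protected`.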
